Application of PDCA Cycle in AI Development and Improvement

Introduction

The world of artificial intelligence (AI) is evolving faster than many organizations can manage. From healthcare to finance, manufacturing to education, AI systems are becoming deeply embedded in how modern society operates. Yet this rapid growth brings a challenge: how do businesses and institutions ensure that these systems remain reliable, ethical, and continuously improving? This is where the PDCA Cycle (Plan, Do, Check, Act) comes in as a structured method for sustainable AI development and enhancement.

The PDCA methodology, which grew out of Walter Shewhart's work on quality control and was popularized by W. Edwards Deming, is designed as a loop for continuous improvement. What makes it powerful in AI contexts is the discipline it brings to iteration. AI systems are not static; they change as they are retrained on new data, adapted to dynamic environments, and adjusted in response to user feedback. Applying the PDCA cycle helps keep AI systems transparent, efficient, and aligned with business and societal goals, while reducing risks such as bias, unreliability, or unintended consequences.

In this article, we explore the application of PDCA in AI development in detail. We unpack each stage of the cycle (Plan, Do, Check, and Act) and look at its practical role in machine learning projects, autonomous systems, and enterprise AI adoption. Along the way, we examine examples from healthcare, finance, manufacturing, and education, and consider how PDCA fosters continuous improvement in AI. By the end, you will see why PDCA is not just a theoretical framework but a practical tool for guiding AI innovation responsibly and strategically.

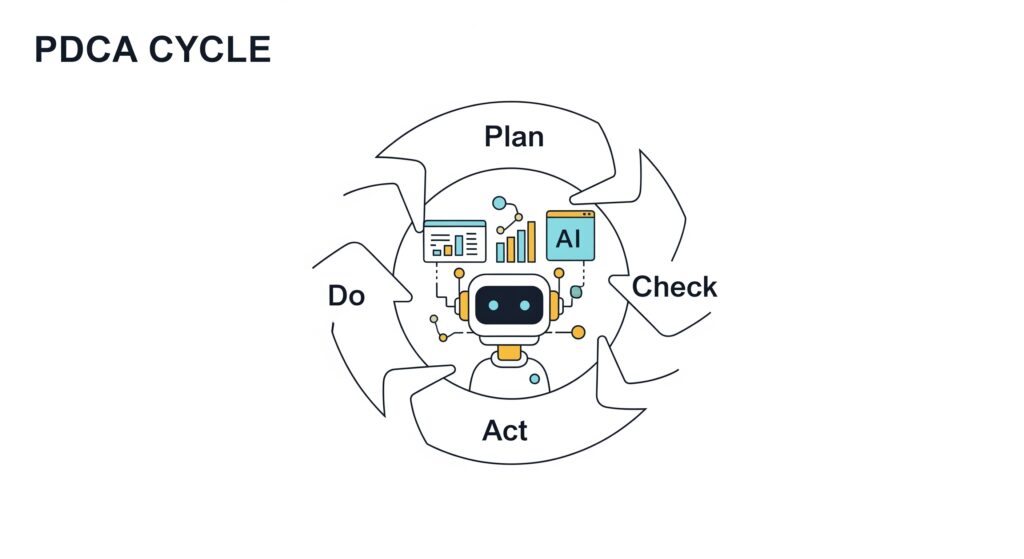

Understanding the PDCA Cycle in the Context of AI

The PDCA Cycle consists of four iterative phases:

- Plan: Establish objectives, analyze data requirements, identify constraints, and design AI development strategies.

- Do: Implement the strategy by building, training, and deploying pilot models.

- Check: Evaluate outcomes against expected performance metrics, ethical standards, and compliance requirements.

- Act: Standardize improvements, scale successful systems, and adapt based on feedback before restarting the cycle.

In AI, this cycle fits the experimental and iterative nature of model building. Unlike much traditional software development, AI projects are highly data-driven and require repeated refinement. For example, a computer vision model for detecting tumors in medical scans cannot succeed after a single round of training; it needs careful planning, controlled testing, rigorous validation, and adjustments before being trusted in clinical settings.

The PDCA cycle transforms AI development from a one-off project into a continuous learning system, where algorithms, datasets, and outcomes are consistently monitored and improved. This reduces risks, increases transparency, and ensures better scalability of AI innovations across industries.
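One way to picture this loop in code is a small driver that repeats the four phases until a target metric is met. The sketch below is illustrative only: `run_pdca`, its callback parameters, and the toy numbers are assumptions made for this example, not part of any library or of a specific team's process.

```python
from typing import Callable, Dict, List


def run_pdca(
    plan: Dict[str, float],
    do: Callable[[Dict[str, float]], object],
    check: Callable[[object], float],
    act: Callable[[Dict[str, float], float], Dict[str, float]],
    max_cycles: int = 5,
) -> List[float]:
    """Repeat Plan-Do-Check-Act until plan["target"] is met or cycles run out."""
    history: List[float] = []
    for _ in range(max_cycles):
        model = do(plan)          # Do: build a pilot model from the current plan
        score = check(model)      # Check: measure it against the objective
        history.append(score)
        if score >= plan["target"]:
            break                 # objective met, ready to standardize and scale
        plan = act(plan, score)   # Act: adjust the plan, then restart the cycle
    return history


# Toy usage: the "model" is just an effort level, and each cycle adds effort.
history = run_pdca(
    {"target": 80.0, "effort": 1.0},
    do=lambda p: p["effort"],
    check=lambda m: 50.0 + 10.0 * m,
    act=lambda p, s: {**p, "effort": p["effort"] + 1.0},
)
print(history)  # [60.0, 70.0, 80.0]
```

In a real project the callbacks would wrap training, evaluation, and replanning steps; the point of the sketch is only that the stopping rule comes from the plan, not from the model.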

Plan: Setting the Foundation for AI Development

Defining Objectives in AI Projects

The Plan stage is where everything begins. Organizations must articulate clear objectives for the AI system. For example, if a retail company wants to deploy AI-driven demand forecasting, its goals may include reducing stockouts, minimizing inventory waste, and improving customer satisfaction. Without well-defined objectives, AI models often fail to deliver measurable business value.

Data Strategy and Ethical Considerations

Data lies at the heart of every AI project. During planning, teams evaluate the availability, quality, and diversity of data. They must also address issues like data bias, which can lead to unfair outcomes. For instance, a recruitment AI trained on biased historical data could unintentionally perpetuate discrimination. By identifying these risks early, organizations can design corrective strategies such as data augmentation or bias detection tools.

Aligning with Regulations and Standards

AI is increasingly subject to regulatory oversight, such as the European Union’s AI Act or sector-specific guidelines in healthcare and finance. In the planning stage, compliance should be built into the project roadmap. For example, a healthcare startup creating AI diagnostics in the United States must adhere to HIPAA for patient privacy while ensuring explainability for medical professionals.

Example: Planning for Autonomous Vehicles

In autonomous vehicle development, planning involves setting safety standards, determining acceptable error thresholds, and defining testing conditions. Engineers map out scenarios such as pedestrian detection, traffic sign recognition, and emergency braking. Without such comprehensive planning, the risks of real-world accidents increase dramatically.

The planning stage is not just about technical design; it is about building a shared vision that connects business goals, technical feasibility, ethical considerations, and compliance requirements.

Do: Building, Testing, and Deploying AI Prototypes

Controlled Implementation of AI Models

The Do stage transforms strategy into action. In AI, this means building models, training them on data, and deploying them in controlled environments. Instead of rolling out an AI solution on a large scale immediately, organizations often start with a pilot project or prototype.

For example, a bank developing AI for fraud detection might first test its model on a limited set of transactions. This allows developers to identify weaknesses before exposing the system to millions of real customers.
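A limited rollout like this needs a stable rule for deciding which transactions reach the pilot model. The following is a minimal sketch of one common approach, hashing an identifier into a fixed bucket; the function name `in_pilot`, the `transaction_id` format, and the 5 percent default are assumptions made for illustration.

```python
import hashlib


def in_pilot(transaction_id: str, pilot_percent: int = 5) -> bool:
    """Return True if this transaction falls in the pilot slice.

    Hashing the ID gives the same answer on every call, so a transaction
    never flips between the pilot model and the existing process.
    """
    digest = hashlib.sha256(transaction_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return bucket < pilot_percent


# Hypothetical IDs: roughly 5 percent of them should land in the pilot.
ids = [f"txn-{i}" for i in range(10_000)]
pilot_share = sum(in_pilot(t) for t in ids) / len(ids)
print(f"pilot share: {pilot_share:.3f}")
```

Because the assignment depends only on the ID, raising `pilot_percent` later keeps every earlier pilot transaction in the pilot, which makes before-and-after comparisons cleaner.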

Tools and Technologies

Modern AI development leverages a range of tools during the Do stage:

- AutoML platforms for rapid prototyping.

- MLOps pipelines for managing experiments and versioning models.

- Cloud-based AI services for scalability and distributed training.

These tools help keep experimentation efficient and results repeatable.

Example: Healthcare Predictive Analytics

In healthcare, AI predictive models are tested in controlled hospital settings before wider deployment. A hospital might use AI to predict patient readmissions for one department. Once the system proves accurate and safe, it can be expanded across the organization. This cautious rollout minimizes risks and protects patient safety.

Importance of Iteration

The Do phase emphasizes that failure is not the end but an opportunity for learning. AI teams must be prepared to run multiple experiments, test variations of algorithms, and refine datasets. The iterative mindset aligns naturally with PDCA’s cycle of continuous improvement.

Check: Evaluating and Validating AI Outcomes

Measuring Model Performance

The Check stage is where outcomes are rigorously compared against the original objectives set in the Plan phase. AI models are evaluated using performance metrics such as accuracy, precision, recall, F1-score, and ROC-AUC. However, performance alone is not enough.
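To make these metrics concrete, here is a minimal sketch that computes accuracy, precision, recall, and F1-score for a binary classifier from its confusion-matrix counts. The helper name `classification_metrics` and the toy labels are assumptions for illustration; ROC-AUC is left out because it needs predicted scores rather than hard labels.

```python
from typing import Dict, Sequence


def classification_metrics(
    y_true: Sequence[int], y_pred: Sequence[int]
) -> Dict[str, float]:
    """Compute basic binary metrics, treating label 1 as the positive class."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if precision + recall
        else 0.0
    )
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Toy data: 3 actual positives among 8 cases, with one miss and one false alarm.
metrics = classification_metrics(
    [1, 1, 1, 0, 0, 0, 0, 0],
    [1, 1, 0, 1, 0, 0, 0, 0],
)
print(metrics)
```

On this toy data accuracy is 0.75 while precision and recall are both 2/3, a small reminder that a single headline number can hide how the model treats the positive class.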

Ethical and Social Audits

Evaluation must also address bias, fairness, and transparency. For example, an AI loan approval system may achieve high accuracy overall but unfairly deny loans to applicants from certain demographics. Checking for fairness ensures that AI systems are not just effective but also ethical.
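A simple first check for this kind of problem is to compare approval rates across groups. The sketch below measures the gap between the highest and lowest group approval rate, which corresponds to the demographic parity notion of fairness. That is only one of several fairness definitions, and the function names, group labels, and data here are assumptions for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def approval_rates(records: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Map each group to its approval rate, given (group, approved) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in records:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def parity_gap(rates: Dict[str, float]) -> float:
    """Difference between the highest and lowest group approval rate."""
    return max(rates.values()) - min(rates.values())


# Hypothetical decisions: group A is approved 8 times in 10, group B 5 in 10.
decisions = (
    [("A", 1)] * 8 + [("A", 0)] * 2 + [("B", 1)] * 5 + [("B", 0)] * 5
)
rates = approval_rates(decisions)
print(rates, round(parity_gap(rates), 2))
```

A large gap does not prove discrimination on its own, but it is a signal that the Check stage should dig further before the system is scaled.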

Explainability and Trust

Stakeholders often require explainable AI (XAI). A model that predicts a cancer diagnosis must provide interpretable reasoning for doctors to trust it. Without explainability, AI adoption faces resistance, particularly in sensitive sectors like healthcare and law.

Real-World Case: Loan Approval AI

A financial institution once tested an AI for approving loans. While the system performed well in simulations, the Check stage revealed biases in approval rates. This insight allowed the bank to retrain the model with more representative data before scaling deployment.

Continuous Monitoring

Checking does not end after deployment. AI systems must be monitored continuously to detect model drift, where performance declines as real-world data changes. For example, a retail recommendation engine may become less effective when consumer trends shift. Ongoing evaluation ensures that models remain relevant and reliable.
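One widely used way to quantify drift in a single input feature is the Population Stability Index (PSI), which compares the binned distribution of recent data against a baseline. The sketch below is a minimal version under stated assumptions: equal-width bins taken from the baseline range, a small floor to avoid taking the logarithm of zero, and synthetic data. The function name `psi` is chosen for this example.

```python
import math
from typing import List, Sequence


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index of `actual` relative to the `expected` baseline."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic example: the recent data has shifted toward higher values.
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]
print(f"PSI vs itself: {psi(baseline, baseline):.3f}")
print(f"PSI after shift: {psi(baseline, recent):.3f}")
```

A commonly quoted rule of thumb treats values below about 0.1 as stable and values above about 0.25 as a significant shift, but those cut-offs are conventions rather than guarantees, and a team would normally calibrate them against its own data.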

Act: Standardizing, Scaling, and Improving AI Systems

Scaling Successful Models

Once an AI system passes evaluation, the Act stage focuses on scaling improvements. If a prototype performs well, it can be deployed across larger datasets, broader user groups, or additional organizational functions.

For example, a manufacturing firm might expand its predictive maintenance AI from one production line to an entire factory network after successful trials.

Institutionalizing Learning

The Act stage is also about institutionalizing what works. This involves documenting best practices, creating standardized workflows, and training staff to work with AI systems. Organizations that institutionalize learning build a culture of continuous improvement, where AI evolves in tandem with business processes.

Adapting to Change

AI operates in dynamic environments. The Act stage prepares organizations to adapt quickly by retraining models, updating datasets, and integrating new algorithms. For instance, cybersecurity AI systems must act swiftly on new threat patterns by retraining models and deploying defenses.

Restarting the Cycle

After acting, the PDCA cycle begins again. Each iteration improves upon the last, ensuring that AI systems grow more accurate, ethical, and reliable over time.

Real-World Applications of PDCA in AI

AI in Healthcare

Hospitals use PDCA for AI diagnostic imaging tools. They plan by setting accuracy benchmarks, deploy models in controlled settings, check model outputs against radiologists' readings, and act by retraining models with new patient data. This cycle helps diagnostic tools improve continuously and maintain patient trust.

AI in Finance

Banks apply PDCA in fraud detection and credit scoring. They plan by defining acceptable risk levels, deploy AI on transaction subsets, check results for false positives and bias, and act by refining thresholds. Continuous loops keep financial systems secure and fair.
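Refining thresholds can be made systematic. As a minimal sketch, assuming the model outputs a fraud score per transaction and the plan set a cap on the false positive rate, the function below picks the lowest score threshold that stays within that cap, which catches as much fraud as the cap allows. The name `pick_threshold`, the cap value, and the toy data are illustrative assumptions.

```python
from typing import Optional, Sequence


def pick_threshold(
    scores: Sequence[float],
    labels: Sequence[int],
    max_false_positive_rate: float = 0.05,
) -> Optional[float]:
    """Lowest threshold whose false positive rate stays within the cap.

    A transaction is flagged when its score is >= the threshold. Returns None
    if even the highest observed score breaks the cap.
    """
    negatives = sum(1 for y in labels if y == 0)
    best = None
    # Lowering the threshold can only add false positives, so stop at the
    # first candidate that exceeds the cap.
    for t in sorted(set(scores), reverse=True):
        fp = sum(1 for s, y in zip(scores, labels) if s >= t and y == 0)
        fpr = fp / negatives if negatives else 0.0
        if fpr > max_false_positive_rate:
            break
        best = t
    return best


# Toy data: label 1 marks confirmed fraud, label 0 a legitimate transaction.
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3, 0.2, 0.1]
labels = [1, 1, 0, 1, 0, 0, 0, 0]
print(pick_threshold(scores, labels, max_false_positive_rate=0.2))  # 0.6
```

With a cap of 0.2 the chosen threshold of 0.6 flags all three fraud cases at the cost of one false alarm among five legitimate transactions; tightening the cap to zero would raise the threshold to 0.8 and miss one fraud case.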

AI in Manufacturing

Smart factories use PDCA in predictive maintenance. They plan by setting downtime reduction goals, deploy AI on a limited set of machines, check the accuracy of predictions, and act by scaling the system plant-wide. The cycle keeps production efficient and cost-effective.

AI in Education

EdTech companies apply PDCA for personalized learning platforms. They plan by setting learning objectives, deploy adaptive models to small student groups, check engagement data, and act by refining lesson recommendations. This ensures learning experiences remain engaging and effective.

Advantages of Using PDCA in AI Development

- Risk Reduction: Flaws are caught early before they scale.

- Continuous Improvement: Iterative cycles ensure AI evolves responsibly.

- Ethical Safeguards: Structured evaluation reduces bias and increases fairness.

- Scalability: Successful models can be confidently expanded.

- Stakeholder Alignment: Clear stages build trust across teams and regulators.

Future of PDCA in AI Governance and Innovation

The importance of PDCA will grow as AI technologies like generative AI, autonomous systems, and large language models spread. With increasing regulatory scrutiny, organizations must show that AI systems undergo structured evaluation and continuous monitoring.

PDCA can also serve as a foundation for AI governance frameworks, supporting accountability while enabling innovation. As AI continues to reshape industries, PDCA is likely to remain a cornerstone of responsible and adaptive development.

Conclusion

The PDCA Cycle is more than just a quality management tool; it is a strategic framework for AI development and improvement. By cycling through Plan, Do, Check, and Act, organizations can ensure their AI systems remain effective, ethical, and continuously improving.

In a world where AI innovation moves quickly, the PDCA cycle provides balance—enabling progress without sacrificing accountability. From healthcare diagnostics to financial fraud detection, from predictive maintenance to personalized learning, PDCA empowers organizations to harness AI responsibly and sustainably.

The future of AI will depend not only on breakthroughs in algorithms and data but also on the discipline of frameworks like PDCA that ensure these technologies evolve in the right direction.

For more insights, visit the ClayDesk Blog: https://blog.claydesk.com