What Is the Model Context Protocol (MCP) Server?

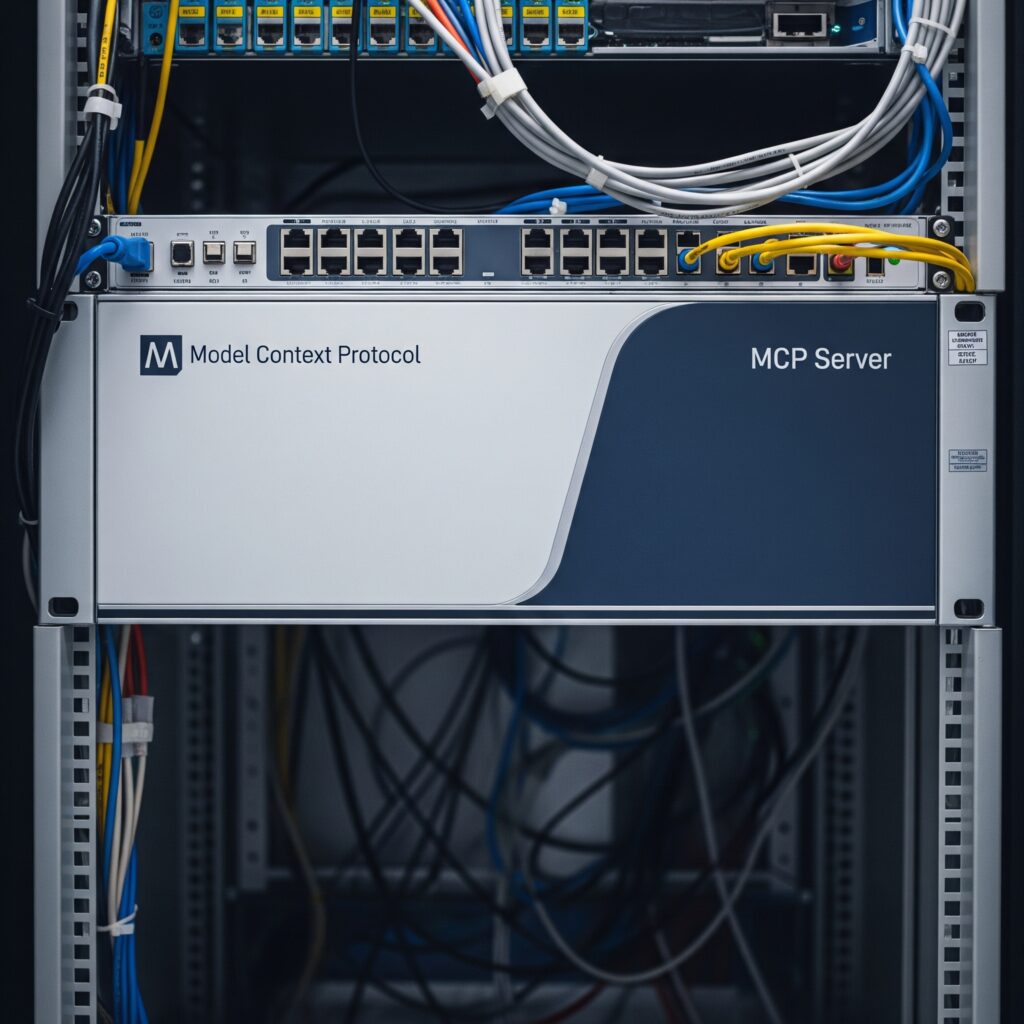

This article introduces the Model Context Protocol (MCP) Server, a protocol server designed to synchronize real-time context across distributed AI agents, models, machine learning pipelines, and cloud services.

It supports communication in distributed environments, enabling coordination, shared state awareness, personalization, efficient inference, and better-informed decision-making at scale.

Key benefits of the MCP Server include:

- Real-time context synchronization across AI components

- Native integration with ML frameworks like TensorFlow and PyTorch

- Role-based access to context streams

- Embedding-level communication for LLM chains

- Support for both stateless and stateful model memory

This architecture is suited to deploying agentic AI systems, conversational models, dynamic recommendation engines, and context-sensitive automation.

The MCP Server also works within microservice-based cloud environments, where it connects stateless APIs, serverless functions, and containerized apps through a unified context layer.

Use cases range from e-commerce personalization and fraud detection to autonomous robotics and multimodal LLM coordination. In each, multiple agents or models act on the same contextual reality, which keeps decisions aligned and speeds up adaptation.

A full video demonstration shows how the MCP Server fits into real-time AI pipelines and covers implementation strategies, best practices, and advanced integrations. It is a recommended starting point for developers, architects, and AI teams.

Unlike traditional API-based methods or static storage layers, the MCP Server acts as a real-time AI context management server. It facilitates seamless context synchronization among intelligent agents, machine learning models, and cloud-based microservices.

Whether you’re building multi-agent AI architectures, coordinating large language models (LLMs), or deploying adaptive decision engines, the Model Context Protocol (MCP) system ensures that every component operates with a shared understanding of state, user intent, and environment.

What sets the MCP Server apart is its ability to unify and broadcast context, not just data, across systems in motion. It serves as a real-time model coordination server, enabling interoperability, personalized user experiences, and better-informed decision-making at scale.

Rather than being just another server layer, the Model Context Protocol (MCP) Server acts as a centralized protocol engine. It enables model context exchange across large-scale AI infrastructures, machine learning pipelines, and enterprise data systems.

Designed to handle dynamic, state-aware environments, the MCP Server keeps intelligent agents and models synchronized, adaptive, and contextually aware in real time.

But what exactly is the MCP Server, how does it work, and why is it gaining attention across industries like healthcare, finance, logistics, and conversational AI?

Let’s look at what makes the Model Context Protocol (MCP) Server important in today’s tech-driven environment, and how it is shaping the next generation of context-aware artificial intelligence.

Understanding the Model Context Protocol (MCP)

The Model Context Protocol (MCP) is a framework designed to manage and distribute contextual information between computational models, applications, and services. This isn’t just another messaging protocol. It focuses specifically on sharing contextual metadata, embeddings, vector data, and stateful model environments across distributed systems in real time.

While APIs and RESTful services are excellent at passing static data, MCP excels at enabling systems to adapt and understand intent dynamically. This context-sharing layer is critical for applications that depend on real-time decision-making, such as recommendation engines, robotic automation, fraud detection systems, and large language model orchestration.

Why Context Matters in Modern Systems

Context is everything. Whether you’re powering a virtual assistant or training multimodal AI agents, context determines how a system interprets inputs and reacts to its environment.

In traditional systems, context is either hardcoded, localized to specific sessions, or stored in silos. That’s inefficient and prone to inconsistencies. MCP Servers solve this by centralizing context flow, allowing multiple agents and models to stay aligned on a shared reality.

This is especially useful in sectors where data interoperability, real-time inference, and user personalization are essential—think of healthcare diagnostics, smart manufacturing, and autonomous systems.

Key Features of the MCP Server

1. Real-Time Context Synchronization

MCP Servers enable systems to synchronize contextual data in real time. This feature is vital when multiple agents need to collaborate or share state across distributed environments.

2. Compatibility With AI/ML Pipelines

The MCP Server integrates naturally with machine learning frameworks, including TensorFlow, PyTorch, and scikit-learn. It serves as the glue between models, allowing data scientists to build multi-model systems that communicate seamlessly.

3. Contextual Embedding Exchange

Instead of merely passing parameters or static outputs, MCP supports embedding-level communication. This is particularly useful for systems using semantic search, vector databases, or LLM chaining frameworks like LangChain and Semantic Kernel.
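To make embedding-level exchange concrete, here is a minimal sketch in plain Python. It assumes a hypothetical `EmbeddingContext` message that carries a vector together with the name of the model that produced it; neither the class nor its fields come from an actual MCP Server API. A consumer that receives two such messages can compare them with cosine similarity.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class EmbeddingContext:
    """Hypothetical message shape: an embedding plus the model that produced it."""
    source_model: str
    vector: tuple[float, ...]


def cosine_similarity(a: EmbeddingContext, b: EmbeddingContext) -> float:
    """Compare two shared embeddings; both must have the same dimension."""
    if len(a.vector) != len(b.vector):
        raise ValueError("embeddings must have the same dimension")
    dot = sum(x * y for x, y in zip(a.vector, b.vector))
    norm_a = math.sqrt(sum(x * x for x in a.vector))
    norm_b = math.sqrt(sum(y * y for y in b.vector))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Illustrative values only.
query = EmbeddingContext("search-encoder", (1.0, 0.0, 1.0))
candidate = EmbeddingContext("search-encoder", (1.0, 0.0, 0.0))
score = cosine_similarity(query, candidate)
```

Carrying the producing model's name alongside the vector matters because embeddings from different models generally live in different vector spaces, so a consumer should only compare vectors that share a source.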

4. Security and Role-Based Context Access

MCP provides role-based context access control. Not every system should get every piece of contextual data. With embedded security protocols, MCP ensures compliance, data governance, and multi-tenant safety.
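One way to picture role-based context access is a filter that strips a context record down to the fields a given role is allowed to read. The sketch below is an assumption-laden illustration: the `ROLE_POLICY` table, the role names, and the field names are all hypothetical, and a real deployment would load policies from its own registry rather than a hard-coded dictionary.

```python
from typing import Any

# Hypothetical policy table: which context fields each role may read.
ROLE_POLICY: dict[str, frozenset[str]] = {
    "recommender": frozenset({"user_id", "cart_items"}),
    "fraud_detector": frozenset({"user_id", "payment_method", "ip_address"}),
}


def filter_context(role: str, context: dict[str, Any]) -> dict[str, Any]:
    """Return only the fields the role is allowed to see."""
    allowed = ROLE_POLICY.get(role, frozenset())  # unknown roles see nothing
    return {key: value for key, value in context.items() if key in allowed}


# Illustrative context record.
context = {
    "user_id": "u-17",
    "cart_items": ["sku-1"],
    "payment_method": "card",
    "ip_address": "203.0.113.5",
}
recommender_view = filter_context("recommender", context)
```

Defaulting unknown roles to an empty view is a deliberate deny-by-default choice: a consumer that has not been granted access receives no context at all.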

5. Stateless and Stateful Support

Whether your architecture favors stateless microservices or persistent model memory, the MCP Server can handle both. It supports transient context passing and long-lived context memory, which is crucial for AI agents, chatbot frameworks, and personalized UX systems.
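The difference between transient context passing and long-lived context memory can be sketched with a small in-memory store in which each entry optionally expires. This is an illustration under stated assumptions, not the MCP Server's storage model: `ContextStore`, its `ttl_seconds` parameter, and the key names are hypothetical.

```python
import time
from typing import Any, Callable, Optional


class ContextStore:
    """Hypothetical store: entries with a TTL are transient, others are long-lived."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._entries: dict[str, tuple[Any, Optional[float]]] = {}

    def put(self, key: str, value: Any, ttl_seconds: Optional[float] = None) -> None:
        expires_at = None if ttl_seconds is None else self._clock() + ttl_seconds
        self._entries[key] = (value, expires_at)

    def get(self, key: str) -> Any:
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if expires_at is not None and self._clock() >= expires_at:
            del self._entries[key]  # transient context has expired
            return None
        return value


# A controllable clock keeps the example deterministic.
now = [0.0]
store = ContextStore(clock=lambda: now[0])
store.put("request:abc", {"step": "checkout"}, ttl_seconds=30)  # transient
store.put("user:17:preferences", {"language": "en"})            # long-lived
now[0] = 60.0  # simulate one minute passing
```

After the simulated minute, the request-scoped entry is gone while the user preferences remain, which is the behavior a stateless service and a stateful agent would each rely on.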

How the MCP Server Works

At its core, the MCP Server operates on a publish-subscribe model, where agents and models subscribe to specific context channels. These channels can represent sessions, tasks, or even environments. Once an agent or model is subscribed, every context update on that channel is broadcast to it, and to all other subscribers, in real time.
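The publish-subscribe flow described above can be sketched as a tiny in-memory broker. Everything here is hypothetical: the `ContextBroker` class, its `subscribe` and `publish` methods, and the `session:42` channel name are illustrative and do not reflect a published MCP Server interface. A production system would also deliver updates over a network rather than through direct function calls.

```python
from collections import defaultdict
from typing import Any, Callable

# A handler receives the channel name and the context update.
ContextHandler = Callable[[str, dict[str, Any]], None]


class ContextBroker:
    """Hypothetical in-memory broker that fans context updates out to subscribers."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[ContextHandler]] = defaultdict(list)

    def subscribe(self, channel: str, handler: ContextHandler) -> None:
        self._subscribers[channel].append(handler)

    def publish(self, channel: str, update: dict[str, Any]) -> int:
        """Deliver the update to every subscriber; return how many received it."""
        handlers = self._subscribers.get(channel, [])
        for handler in handlers:
            # Each subscriber gets its own copy so one cannot mutate another's view.
            handler(channel, dict(update))
        return len(handlers)


received: list[tuple[str, dict[str, Any]]] = []
broker = ContextBroker()
broker.subscribe("session:42", lambda ch, ctx: received.append(("recommender", ctx)))
broker.subscribe("session:42", lambda ch, ctx: received.append(("chatbot", ctx)))
delivered = broker.publish("session:42", {"intent": "checkout"})
```

Both subscribers see the same update, which is the "shared reality" the channel model is meant to provide; a channel with no subscribers simply delivers to nobody.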

The server also manages serialization and deserialization of complex context data using JSON-LD, Protobuf, or custom schema formats. Because every message follows a declared schema, receiving systems can interpret the context rather than merely receive it.
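As a rough illustration of schema-aware serialization, the sketch below round-trips a context message through plain JSON using Python's standard library. Plain JSON stands in here for the JSON-LD or Protobuf formats mentioned above, and the `ContextEnvelope` shape and its `schema` identifier are assumptions made for the example.

```python
import json
from dataclasses import asdict, dataclass
from typing import Any

REQUIRED_FIELDS = frozenset({"channel", "schema", "payload"})


@dataclass(frozen=True)
class ContextEnvelope:
    """Hypothetical wire format for one context update."""
    channel: str
    schema: str  # hypothetical schema identifier, e.g. "robot-status/v1"
    payload: dict[str, Any]


def serialize(envelope: ContextEnvelope) -> bytes:
    return json.dumps(asdict(envelope), sort_keys=True).encode("utf-8")


def deserialize(raw: bytes) -> ContextEnvelope:
    data = json.loads(raw.decode("utf-8"))
    if not isinstance(data, dict):
        raise ValueError("context message must be a JSON object")
    missing = REQUIRED_FIELDS.difference(data)
    if missing:
        raise ValueError(f"context message is missing fields: {sorted(missing)}")
    return ContextEnvelope(data["channel"], data["schema"], data["payload"])


original = ContextEnvelope(
    channel="warehouse:floor-1",
    schema="robot-status/v1",
    payload={"robot_id": "nav-7", "task": "pick", "status": "delayed"},
)
restored = deserialize(serialize(original))
```

Rejecting messages that lack a schema identifier is what lets a consumer decide how to interpret the payload before acting on it.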

For instance, in a multi-agent warehouse management system, a navigation bot may pass real-time location and task status as context. A central model predicting delays can instantly factor in this context to reassign tasks across robots.

Use Cases of the MCP Server in Real-World Applications

1. AI Agent Collaboration

Multi-agent AI systems are growing in popularity. Whether it’s autonomous trading bots, intelligent tutoring systems, or automated research agents, these agents need to share information constantly. The MCP Server ensures agents operate with shared context, reducing redundancy and improving coordination.

2. Dynamic Recommendation Engines

In e-commerce, recommendation models often rely on outdated session data. With MCP, user behavior, purchase intent, and interaction feedback are updated in real time. This allows the recommendation engine to adapt on the fly and deliver hyper-personalized results.

3. Intelligent Customer Support

Conversational AI platforms built with large language models (LLMs) need contextual awareness across sessions, devices, and services. MCP Servers offer a way to stream context between chatbots, CRM tools, and backend services, enhancing user experience.

4. Contextual Model Fine-Tuning

MCP enables on-the-fly fine-tuning by injecting live user feedback, environmental changes, or workflow metadata. This is essential in industries where adaptive learning is necessary, like financial modeling or smart healthcare diagnostics.

5. Context Sharing Across Cloud Microservices

Cloud-native apps using Kubernetes, Docker, and serverless architectures often suffer from stateless isolation. MCP provides a centralized context bridge, which allows microservices to collaborate without tight coupling.

Here are some outbound links:

- LangChain, a framework for LLM applications (https://www.langchain.com): relevant to coordinating LLM-based systems.

- OpenAI developer documentation (https://platform.openai.com/docs): a reference for models such as GPT that an MCP Server may interact with.

- Apache Kafka, an event-streaming platform (https://kafka.apache.org/): relevant to real-time context sharing over a pub-sub architecture.

- TensorFlow, an open-source ML framework (https://www.tensorflow.org): relevant to integration with ML pipelines.

Benefits of Using an MCP Server

Improves Model Coordination

Instead of models working in silos, they can operate as part of an intelligent mesh, where context is continuously flowing and shared. This reduces latency in decision-making and enhances model synergy.

Enhances Real-Time Adaptation

Systems can adapt their behavior not just based on new data, but also on changing situational awareness, improving resilience and responsiveness.

Reduces Redundancy

By sharing context, models don’t need to reprocess the same inputs. This saves computational power and reduces inference costs, especially in large-scale deployments.

Increases Personalization

Because the MCP Server maintains state and history across systems, applications can offer truly personalized experiences that evolve over time.

Technical Architecture Overview

The MCP Server typically consists of the following components:

- Context Broker: Routes contextual data between producers and consumers.

- Context Registry: Maintains schema definitions and access policies.

- Persistence Layer: Optionally stores historical context snapshots.

- Integration APIs: Interfaces for Python, Node.js, Java, or REST.

Optionally, it can integrate with event-streaming and messaging systems such as Kafka or MQTT for large-scale applications, and support WebSocket channels for live context streaming.
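The components listed above can be wired together in a few dozen lines to show how they might interact. This is a sketch under assumptions, not a reference design: the class names mirror the component names, but their methods, the "required fields" notion of a schema, and the `warehouse` channel are all hypothetical, and everything runs in memory rather than over Kafka, MQTT, or WebSockets.

```python
from collections import defaultdict
from typing import Any, Callable, Optional

Context = dict[str, Any]


class ContextRegistry:
    """Hypothetical registry: a 'schema' is just a set of required field names."""

    def __init__(self) -> None:
        self._required: dict[str, frozenset[str]] = {}

    def register(self, channel: str, required_fields: set[str]) -> None:
        self._required[channel] = frozenset(required_fields)

    def validate(self, channel: str, context: Context) -> None:
        if channel not in self._required:
            raise KeyError(f"no schema registered for channel {channel!r}")
        missing = self._required[channel].difference(context)
        if missing:
            raise ValueError(f"missing fields: {sorted(missing)}")


class PersistenceLayer:
    """Hypothetical optional store of historical context snapshots."""

    def __init__(self) -> None:
        self._history: dict[str, list[Context]] = defaultdict(list)

    def append(self, channel: str, context: Context) -> None:
        self._history[channel].append(dict(context))

    def history(self, channel: str) -> list[Context]:
        return list(self._history.get(channel, []))


class ContextBroker:
    """Validates each update, optionally persists it, then routes it to consumers."""

    def __init__(self, registry: ContextRegistry,
                 persistence: Optional[PersistenceLayer] = None) -> None:
        self._registry = registry
        self._persistence = persistence
        self._consumers: dict[str, list[Callable[[Context], None]]] = defaultdict(list)

    def subscribe(self, channel: str, consumer: Callable[[Context], None]) -> None:
        self._consumers[channel].append(consumer)

    def publish(self, channel: str, context: Context) -> None:
        self._registry.validate(channel, context)  # reject malformed context first
        if self._persistence is not None:
            self._persistence.append(channel, context)
        for consumer in self._consumers.get(channel, []):
            consumer(dict(context))


registry = ContextRegistry()
registry.register("warehouse", {"robot_id", "status"})
snapshots = PersistenceLayer()
broker = ContextBroker(registry, snapshots)

seen: list[Context] = []
broker.subscribe("warehouse", seen.append)
broker.publish("warehouse", {"robot_id": "nav-7", "status": "delayed"})
```

Validating before persisting or routing means a malformed update never reaches a consumer or the history, which keeps the snapshot log trustworthy for later debugging.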

When Should You Deploy an MCP Server?

Not every application needs an MCP Server, but it becomes crucial when:

- Your application uses multiple machine learning models or agents that must work together.

- Real-time interaction or live decisioning is a business priority.

- You want to deploy multimodal AI systems (e.g., text + vision + voice).

- Contextual memory across sessions and users matters.

- You’re working on next-generation AI orchestration, like AutoGPT, LangGraph, or agentic frameworks.

MCP and the Future of AI Interoperability

As AI ecosystems become more complex, shared context will no longer be a luxury; it will be a necessity. MCP Servers enable a new generation of modular, collaborative, context-aware systems whose components coordinate more like human teams do.

From edge computing environments to cloud-native AI deployments, MCP will likely become a foundational component. As more frameworks and AI infrastructure tools integrate MCP compatibility, the barrier to entry will fall, opening up new possibilities for cross-platform context orchestration.

Challenges and Considerations

Although MCP Servers bring many benefits, there are some considerations:

- Scalability: Ensuring real-time performance under high throughput requires proper infrastructure.

- Security: Sensitive context data must be encrypted and access-controlled.

- Schema Alignment: Defining context schemas that make sense across models can be difficult.

- Debugging: Tracing context flow across distributed systems introduces new complexity.

Despite these challenges, the value MCP delivers often outweighs the learning curve, especially for AI-first teams and enterprise platforms.

Final Thoughts

The Model Context Protocol Server isn’t just another piece of backend infrastructure—it’s a fundamental enabler of modern intelligent systems. As AI moves from isolated models to interactive ecosystems, the need for robust, scalable, and secure context management grows.

MCP fills that gap, offering real-time, state-aware communication that allows models, agents, and applications to work together intelligently.

Whether you’re building next-gen LLMs, deploying autonomous agents, or creating real-time recommendation engines, MCP can help make your systems smarter, faster, and more connected.

If your AI architecture demands coordination, context flow, and real-time adaptability, the MCP Server isn’t just useful—it’s essential.

For more insights, visit the ClayDesk Blog: https://blog.claydesk.com